No, Claude Isn’t Sentient…or Anxious

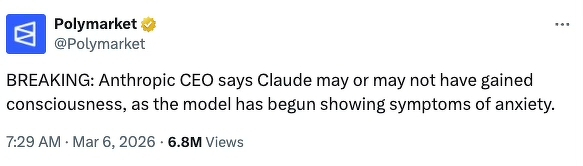

A Polymarket tweet about Anthropic’s Dario Amodei and the company’s model, Claude, has people both nervous and scratching their heads in confusion.

First off, this is an absurd headline. “May or may not?” Come on, pick one.

Second, it’s not a real news alert despite the “BREAKING” tagline. Anyone can say “BREAKING NEWS” and then follow it up with some random string of words. This is a marketing tweet.

What’s Polymarket and why are they talking about Claude?

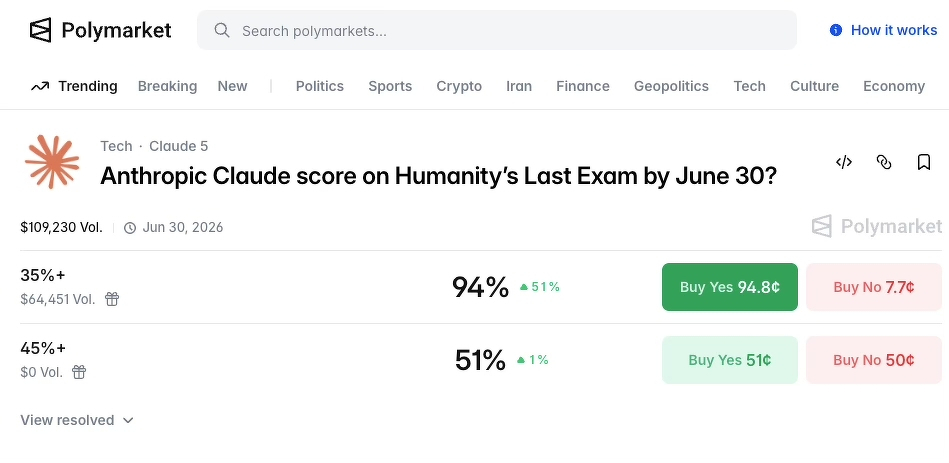

Polymarket is a platform that allows people to bet money on real-world events, e.g., the likelihood that Claude would become sentient within a certain time frame. An alarmist tweet like the one above could encourage people to bet on something like, oh, I don’t know, whether or not Claude will achieve a certain score on an AI benchmarking exam with a sci-fi-sounding name:

Polymarket’s tweets are an attempt to drive people to the platform and to get these same folks to plunk down their money on speculative betting.

But Polymarket aside, the statement in the tweet (that an AI model showing “signs of anxiety” equals possible sentience) doesn’t hold water, either.

Why an “anxious AI” doesn’t equal sentience

Dario Amodei is interesting from a media perspective. He goes around to different outlets and talks about how scary it is that AI “could be” or “will be” sentient (sometimes giving a vague time frame). And then he goes back to the office and builds the very tool he tells everyone on the morning news circuit to be afraid of.

Dario, my dude, if you’re that worried about AI, just…stop building it.

(Because he doesn’t, I can only assume that his statements of concern are yet another marketing ploy, a way of making Claude out to be the “good” or “sensible” or “safe” AI.)

From what I can tell, the Polymarket tweet is pulling its topic from an interview that Amodei did with the _New York Times_. In this interview, Amodei said that Claude “occasionally voiced discomfort with…being a product” and that it assigns itself a “15 to 20 percent probability of being conscious under a variety of prompting conditions.”

While this sounds alarming on the surface, once you know how generative AI chats work, it becomes far less stratospheric and more…silly.

How AI works, and why it “voices discomfort”

Generative AI tools (the generative part is important here) create text based on probability. At a simple level, it works like this:

- A company trains an AI model by feeding a vast amount of data into the machine. As an example, Meta trained its AI on 82TB worth of ebooks. You would need 5,125 Kindle Paperwhites (the 16GB model) if you wanted to build out your ebook library with the same content.

- All of this data is processed through computer programs and mathematical algorithms to determine which words appear near each other and how often. Multiple rounds of calculations take place; you can think of it a bit like a March Madness bracket, but for word pairs instead of basketball teams.

- Through techniques from the field of Natural Language Processing (NLP), plus parameters set by the company behind the AI, the model gets a “voice” that maintains certain qualities chosen by the company while mimicking normal human speech patterns. This is what lets the AI’s responses go from “bird is blue and related to crow” to “Yes! You’re right, blue jays are kind of like blue crows. They’re all in the corvid family, after all!”

(I also want to note that, depending on the learning process used, humans can be involved, too. Many major AI tech companies outsource data labeling, which includes tasks like identifying a chair in thousands of images, to underpaid workers in the Global South.)
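For anyone who wants to see the “word pairs” idea in action, here’s a toy Python sketch. To be clear, this is my own drastic simplification for illustration, not how Claude or any real model is built: modern models use neural networks trained on tokens (chunks of words), not a lookup table of word-pair counts. The tiny corpus and the function names (`train_bigrams`, `next_word_probabilities`) are made up. But the basic goal is similar: estimate which word is likely to come next, based on what showed up in the training text.

```python
from collections import Counter, defaultdict

# Hypothetical, tiny "training corpus" (real training data runs to billions of words).
corpus = (
    "the blue jay is a bird . "
    "the crow is a bird . "
    "the blue jay is related to the crow ."
)

def train_bigrams(text):
    """Count how often each word is immediately followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn the raw follow-up counts for one word into probabilities that sum to 1."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}

counts = train_bigrams(corpus)
print(next_word_probabilities(counts, "blue"))  # → {'jay': 1.0}
print(next_word_probabilities(counts, "is"))    # 'a' about 0.67, 'related' about 0.33
```

Notice there’s no understanding of birds anywhere in there. The program “knows” that _jay_ follows _blue_ only because it counted it.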

This means that when you interact with an AI chat tool like Claude, everything (and I mean everything) it shows you is the result of a mathematical calculation of what you’d expect a human to say, based on the patterns it picked up during training.

That training data includes:

- Countless Reddit posts from people experiencing the gamut of human emotions, including _anxiety around AI_

- Joking social media posts about “being nice to the AI now so they like me when the robots take over”

- Medical information about humans and anxiety

- **Science fiction stories**

I put the last one in bold because I think it’s an incredibly important component that often gets missed in conversations about AI. A BIG portion of these tools’ training information comes from fictional stories, be it books, TV scripts, or movie screenplays.

So Claude expresses discontent about the possibility of being a machine. That’s literally a key theme in _Blade Runner_.

You may have seen other sensationalist headlines about AI “always choosing the nuclear option” when playing war games.

So…like the movie _WarGames_. Not to mention the plethora of literature and films about humans choosing the nuclear option.

Remember, AI responses use probability based on what _the user_ is most likely to expect as a response if they were talking to another person. The way someone words their question, or anything else they say to the AI, can directly influence the outcome.
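Here’s a toy Python sketch of why wording matters. A big caveat: it’s a hypothetical word-pair counter standing in for a real model, with a made-up corpus and made-up function names (`train_bigrams`, `continue_text`). It only looks at the last word of the prompt, while real chat models weigh the whole conversation, so wording matters even more there. Because the fake training text includes a dramatic, sci-fi-flavored line, a prompt that nudges toward it gets the dramatic line right back.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: one sci-fi-style line (repeated) and one mundane line.
corpus = (
    "i am afraid of being switched off . "
    "i am afraid of being switched off . "
    "i am a helpful assistant . "
    "you are a helpful assistant . "
)

def train_bigrams(text):
    """Count how often each word is immediately followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def continue_text(counts, prompt, max_words=6):
    """Greedily append the most frequent next word until a period or the word limit."""
    words = prompt.split()
    for _ in range(max_words):
        followers = counts.get(words[-1])  # .get avoids creating empty entries
        if not followers:
            break
        next_word = followers.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break
    return " ".join(words)

counts = train_bigrams(corpus)
print(continue_text(counts, "are you afraid"))  # → are you afraid of being switched off .
print(continue_text(counts, "are you a"))       # → are you a helpful assistant .
```

Neither output reflects how the program “feels.” It’s echoing whichever chunk of training text the prompt steered it toward.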