What to Do When AI News Is Scary

Reporting around AI news and developments might be making you feel … nervous.

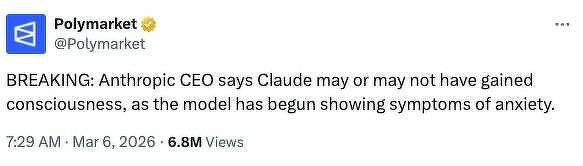

And that’s exactly how the creators of these tools, their leaders, and even the media outlets publishing headlines like “Claude may or may not be sentient!” want you to feel.

The more nervous you feel, the more you’ll click to read, and the more time you’ll spend on the page. And if you don’t push back on such vague or outlandish claims (which is hard to do, thanks to the way such headlines are structured), then the value of these AI companies rises and rises in terms of valuations, sale prices, and stock prices.

But here’s the thing: any time someone talks about artificial general intelligence (AGI) or “possible” AI sentience, they’re engaging in marketing-speak.

Here’s what I want you to remember:

- Fear of AI, of the “robots taking over,” has been a narrative in science fiction entertainment for decades.

- AI tools are trained on lots of fictional content, including a library’s worth of science fiction. Because they work by mathematical prediction, responses that echo sci-fi texts are common.

- AGI is, in many cases, defined by AI companies as a financial reporting metric, not as the actual achievement of machines replicating and exceeding complete human intelligence.

- Headlines are meant to drive clicks. (Nope, search engine optimization isn’t dead; it’s alive and well. I have a whole business built around it, though I don’t engage in sensationalist click-driving articles.)

The next time you come across a headline about AI that makes you want to throw your hands up and live in the woods far away from anything remotely robotic, take a step back and do these checks.

AI News Check #1: Who’s behind the publication of this headline?

- News outlet: There’s a good chance there’s some sensationalism involved.

- Scientific journal: There could be some truth here; give the paper a read.

- Corporate social media post: Unless you’re connected to a leading AI research firm, don’t take this at face value.

AI News Check #2: Who are the main sources?

- AI company leader: There’s a good chance this person is saying something specific, even if not entirely true, to drive up company valuation or generate media interest during a slump.

- AI investor: This person likely wants or needs their target companies to rise in value, so they’re also trying to drive up those companies’ worth and create media interest.

- AI researcher: This could be based in truth. Some researchers are working for corporations, though, so check out their credentials.

AI News Check #3: Are the associated claims quantitative or qualitative?

Quantitative claims involve numbers, statistics, data, and hard facts. Qualitative claims are based more in feeling and impression.

If I’m trying to market a new dish soap, I will need some quantitative claims about how well it works (“cuts grease 2x faster than the leading competitor”), but I’ll also need qualitative information about how test audiences felt when using the product. This helps me shape my marketing campaign.

When you’re presented with a headline like the one pictured below, it’s easy to react in your gut as if you’ve read a quantitative fact. But this is an entirely qualitative statement: “may or may not,” “begun showing.” There are no facts, figures, or even definitive statements in this. It’s sensationalist for marketing purposes.

- It’s posted by a corporate account, one that has a vested interest in Claude achieving certain performance levels on an upcoming intelligence benchmark test (people who use Polymarket can bet on the outcome of said test).

- It’s quoting, and not even directly, an AI company CEO.

It’s hard not to be overwhelmed and alarmed by the amount of sensational AI “news” passing through our feeds every day. We already absorb way more information before lunch than the human brain can really handle. But if you can, take a breather and run through those checks.

Or just close your browser and ignore the offending headline. That’s okay too.